Machine Learning in Ableton Live

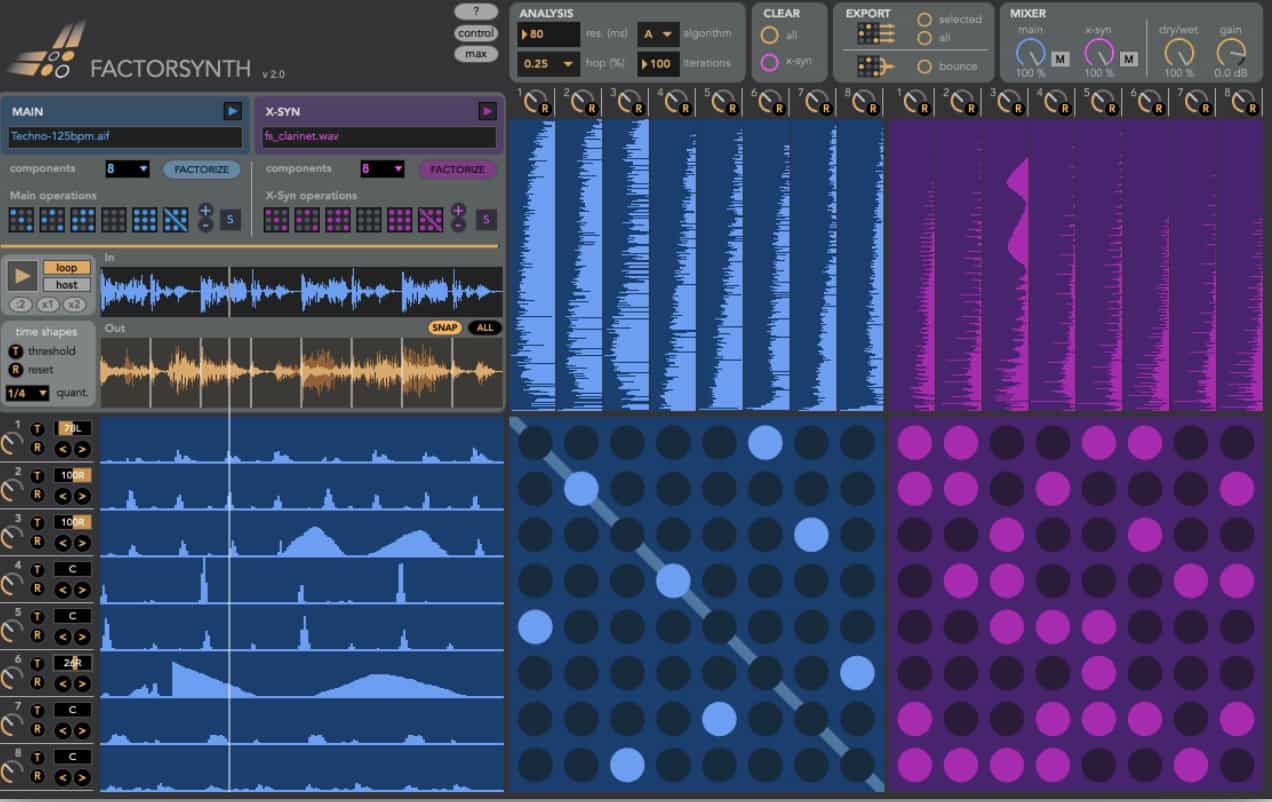

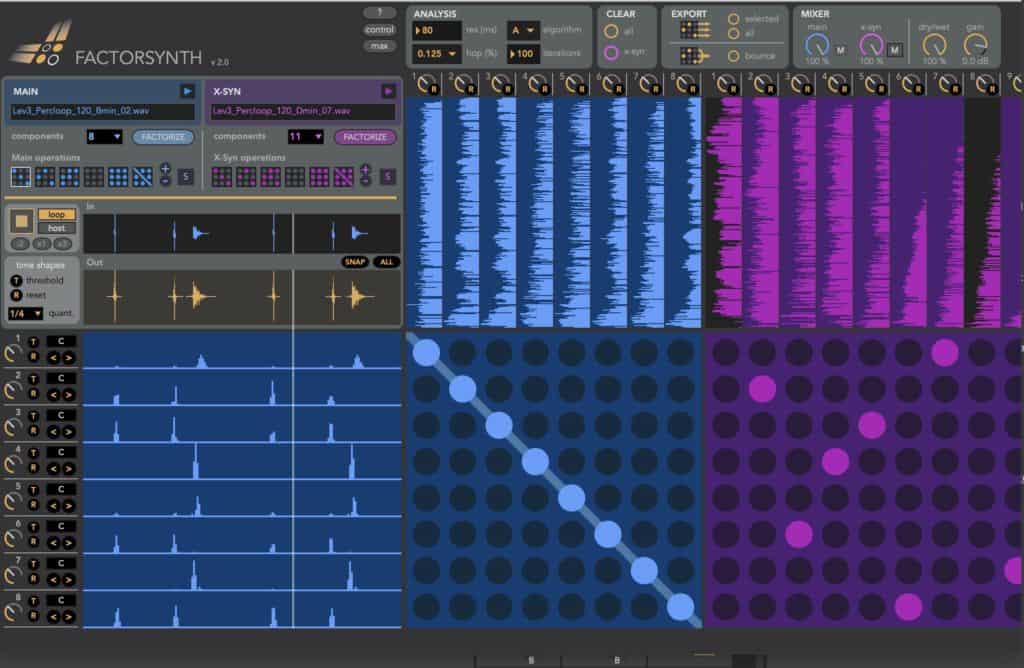

Machine learning comes to Ableton Live with Factorsynth, a Max for Live device that uses a data analysis algorithm called matrix factorization to decompose any audio clip into a set of temporal and spectral elements. By rearranging and modifying these components, you can apply powerful transformations to your clips: removing notes or motifs, creating new ones, randomizing melodies or timbres, changing rhythmic patterns, remixing loops in real time, applying effects selectively to certain elements of the sound, and creating complex sound textures.

Machine Learning

Factorsynth is a new kind of musical tool. It uses machine learning techniques to decompose any input sound into a set of temporal and spectral elements.

By rearranging and modifying these elements, you can apply powerful transformations to your clips, such as removing notes or motifs, creating new ones, randomizing melodies or timbres, changing rhythmic patterns, remixing loops in real time, and creating complex sound textures.

Factorsynth is a one-of-a-kind device that uses machine learning to deconstruct any sound into elements. Two years after the initial release comes Factorsynth 2, the first major update. Following many user suggestions and requests, version 2 is more versatile yet easier to use, with a simplified workflow and numerous new features.

It is now possible to pan the components individually, which lets you, for example, upmix a mono clip to stereo. Another powerful new feature is quantized shifting of the components, which lets you change the rhythmic structure of riffs and drum loops. A second, alternative decomposition algorithm is available, as well as more detailed control of the playback region.

Factorsynth Workflow

Unlike traditional audio effect devices, which take the track’s audio as input and generate output in real time, Factorsynth is a clip-based device. It works on audio clips from your Live set that have been loaded into Factorsynth by drag and drop. Once an audio clip has been loaded into Factorsynth, it is decomposed into elements (exactly how to do this is covered in the next sections). The decomposition process is called factorization because it is based on a technique called non-negative matrix factorization (NMF).
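To give a rough idea of what NMF does, here is a minimal Python sketch. It is not Factorsynth's code, and the exact algorithm variant the device uses is not described here. The sketch assumes the sound is represented as a non-negative matrix `V` (for example a magnitude spectrogram, frequency by time) and approximates it as `W @ H`, where the columns of `W` play the role of spectral elements and the rows of `H` the role of temporal elements. The function name `nmf`, the toy data, and the choice of the classic multiplicative update rule are all assumptions made for illustration.

```python
import numpy as np

def nmf(V, n_components, n_iter=500, eps=1e-9, seed=0):
    """Approximate a non-negative matrix V (freq x time) as W @ H.

    Uses multiplicative updates that minimize the squared error.
    Illustrative only; not the implementation used by Factorsynth.
    """
    rng = np.random.default_rng(seed)
    n_freq, n_time = V.shape
    W = rng.random((n_freq, n_components)) + eps  # spectral elements (columns)
    H = rng.random((n_components, n_time)) + eps  # temporal elements (rows)
    for _ in range(n_iter):
        # Each update keeps the factors non-negative by construction.
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
    return W, H

# Toy "spectrogram": two spectral shapes, each active at different times.
true_W = np.array([[1.0, 0.0],
                   [0.5, 0.0],
                   [0.0, 1.0],
                   [0.0, 0.8]])
true_H = np.array([[1.0, 0.0, 1.0, 0.0, 1.0, 0.0],
                   [0.0, 1.0, 0.0, 1.0, 1.0, 0.0]])
V = true_W @ true_H

W, H = nmf(V, n_components=2)
rel_error = np.linalg.norm(V - W @ H) / np.linalg.norm(V)
print("relative reconstruction error:", rel_error)
```

In a real audio setting, `V` would come from a short-time Fourier transform of the clip, and the number of components would be chosen by the user.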

Factorsynth Allows You to

- Remove certain elements (notes, drum hits) from clips

- Change the rhythm of a drum loop by separately displacing its components (kick drum, snare, etc.)

- Randomize the timbre and the internal temporal structure of sounds

- Create infinite rhythm and timbre variations of your loops while mixing in session view

- Create complex textures out of a song excerpt

- Create new elements consistent with the overall timbre and rhythm of the original sound

- Upmix mono clips to stereo clips by panning the individual components

- Apply audio effects selectively only to certain elements of the sound (e.g. apply reverb only to the snare)

- Separately process attack and sustain portions of notes, or consonants in voice signals

- Obtain hybrid sounds by using a new kind of component cross-synthesis (e.g. making each drum element drive a separate timbre element of a second sound)

- Discover hidden components in sounds that you didn’t notice before
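Several of the operations above (removing elements, panning components individually) can be pictured as applying a gain to each component before the factors are multiplied back together. The following Python sketch illustrates that idea under stated assumptions: `W` and `H` are the spectral and temporal factors of a magnitude spectrogram, and the helper names `remix` and `pan_stereo`, as well as the constant-power pan law, are hypothetical choices for this example rather than anything taken from Factorsynth. Converting the result back to audio (phase handling and the inverse transform) is omitted.

```python
import numpy as np

def remix(W, H, gains):
    """Rebuild a magnitude spectrogram with one gain per component.

    A gain of 0 removes a component; a gain of 1 leaves it unchanged.
    """
    gains = np.asarray(gains, dtype=float)
    return (W * gains) @ H  # broadcasting scales each column of W

def pan_stereo(W, H, pans):
    """Pan each component: -1 is hard left, +1 is hard right.

    Uses a constant-power pan law (an assumption for this sketch).
    """
    angles = (np.asarray(pans, dtype=float) + 1.0) * np.pi / 4.0
    left = remix(W, H, np.cos(angles))
    right = remix(W, H, np.sin(angles))
    return left, right

# Two components with trivially separable spectra, for illustration.
W_demo = np.array([[1.0, 0.0],
                   [0.0, 1.0]])
H_demo = np.array([[1.0, 2.0],
                   [3.0, 4.0]])

without_second = remix(W_demo, H_demo, [1.0, 0.0])           # drop component 2
left, right = pan_stereo(W_demo, H_demo, pans=[-1.0, 1.0])   # split left/right
```

Here the first call silences the second component, and the second call sends one component to each channel, which is the basic idea behind upmixing a mono clip to stereo.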

About J.J. Burred

J.J. Burred is an independent researcher, software developer and musician based in Paris. With a background in machine learning and signal processing, his work aims at developing innovative tools for music and sound creation, analysis and search. After earning a PhD from the Technical University of Berlin, he worked as a researcher at IRCAM and Audionamix, on topics such as source separation, automatic music analysis, sound classification, content-based search and sound synthesis. His current main activity concerns the exploration of machine learning techniques for new methods of sound analysis/synthesis aimed at musical creation.
